WARP Project Forums - Wireless Open-Access Research Platform

#1 2018-Dec-06 13:08:11

- Yan Wang

- Member

- Registered: 2018-Oct-25

- Posts: 21

802.11 Reference Design

Hello,

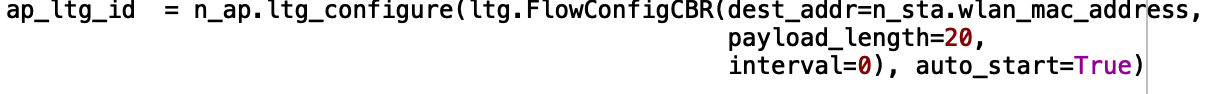

I have a question about running the experiment log_capture_two_node_two_flow.py. What is the unit of the interval displayed in the figure?

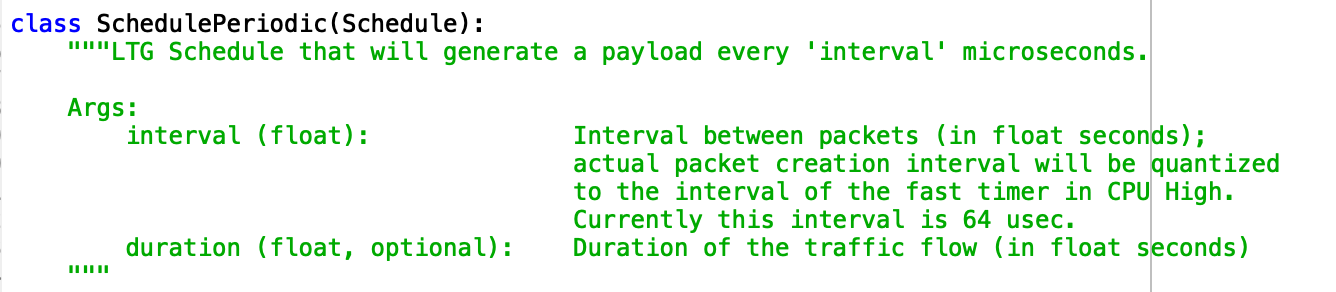

The source code shown in the figure says the unit is microseconds, but when I set the interval to 10000 (which should be 10 ms), the code does not accept the value and reports an error.

#2 2018-Dec-06 13:36:09

- murphpo

- Administrator

- From: Mango Communications

- Registered: 2006-Jul-03

- Posts: 5159

Re: 802.11 Reference Design

The Python interface to creating LTG flows uses float seconds for the interval and duration arguments. So a 10msec packet creation interval would be 'interval=10e-3'. The Python code converts the float seconds to microseconds when constructing the over-the-wire command message to the node.
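As a rough illustration of that conversion, here is a minimal sketch. The helper name `seconds_to_usec` is hypothetical and is not part of the wlan_exp framework; it only mirrors the float-seconds-to-microseconds step described above.

```python
def seconds_to_usec(seconds):
    """Convert float seconds to integer microseconds.

    Hypothetical helper for illustration only; the real conversion
    happens inside the Python framework when it builds the command.
    """
    # round() guards against floating-point error before truncating to int
    return int(round(seconds * 1e6))


# A 10 ms interval is passed to the Python interface as float seconds...
interval_sec = 10e-3

# ...and would correspond to this many microseconds on the wire.
interval_usec = seconds_to_usec(interval_sec)
print(interval_usec)
```

Passing 10000 as the interval would therefore be read as 10000 seconds, not 10000 microseconds.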

#3 2018-Dec-07 15:51:10

- Yan Wang

- Member

- Registered: 2018-Oct-25

- Posts: 21

Re: 802.11 Reference Design

murphpo wrote:

The Python interface to creating LTG flows uses float seconds for the interval and duration arguments. So a 10msec packet creation interval would be 'interval=10e-3'. The Python code converts the float seconds to microseconds when constructing the over-the-wire command message to the node.

Hello,

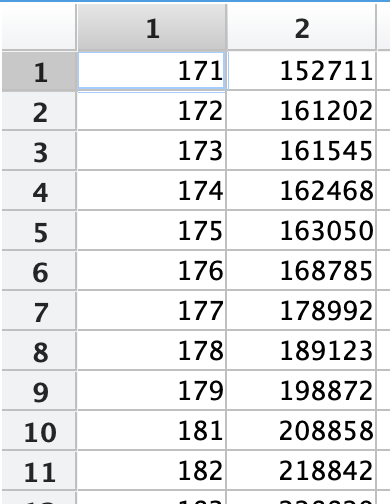

I set the interval to 10e-3 and captured the log data again, but I still ran into a problem. In the figure, the first column is the MAC sequence number of each packet received at the STA node, and the second column is the timestamp of that packet, so each row describes one reception. The timestamps show that the AP did not wait 10 ms between packets sent to the STA until the sixth packet. Why did that happen?

#4 2018-Dec-08 14:15:37

- murphpo

- Administrator

- From: Mango Communications

- Registered: 2006-Jul-03

- Posts: 5159

Re: 802.11 Reference Design

The LTG interval controls the rate at which the LTG code creates and enqueues packets. The actual over-the-air Tx timing is controlled by the lower MAC. In this case it is likely that the lower MAC deferred transmissions (maybe due to medium activity, or previous Tx losses). Then, when the lower MAC resumes transmitting, the queued LTG packets are de-queued as quickly as possible until the Tx queue is empty.
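The effect can be sketched with a toy model. This is not the reference design's MAC code; the function name, the deferral time (45 ms), and the per-packet Tx time (1 ms) are all assumed values chosen only to show the pattern. Times are integer microseconds.

```python
def simulate_tx_times(interval, num_packets, defer_until, tx_time):
    """Toy model: packets are enqueued every `interval`, but the MAC
    cannot start transmitting before `defer_until`. All arguments are
    assumed illustration values in microseconds."""
    tx_times = []
    mac_free_at = defer_until  # earliest time the MAC can transmit
    for i in range(num_packets):
        enqueue_time = i * interval
        # A packet goes out once it is enqueued AND the MAC is free
        start = max(enqueue_time, mac_free_at)
        tx_times.append(start)
        mac_free_at = start + tx_time
    return tx_times


times = simulate_tx_times(interval=10000, num_packets=8,
                          defer_until=45000, tx_time=1000)
# Gaps between consecutive transmissions, in microseconds
gaps = [b - a for a, b in zip(times, times[1:])]
print(gaps)
```

In this model the backlog built up during the deferral drains back to back, and the gaps return to the configured 10 ms interval only once the queue is empty.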
